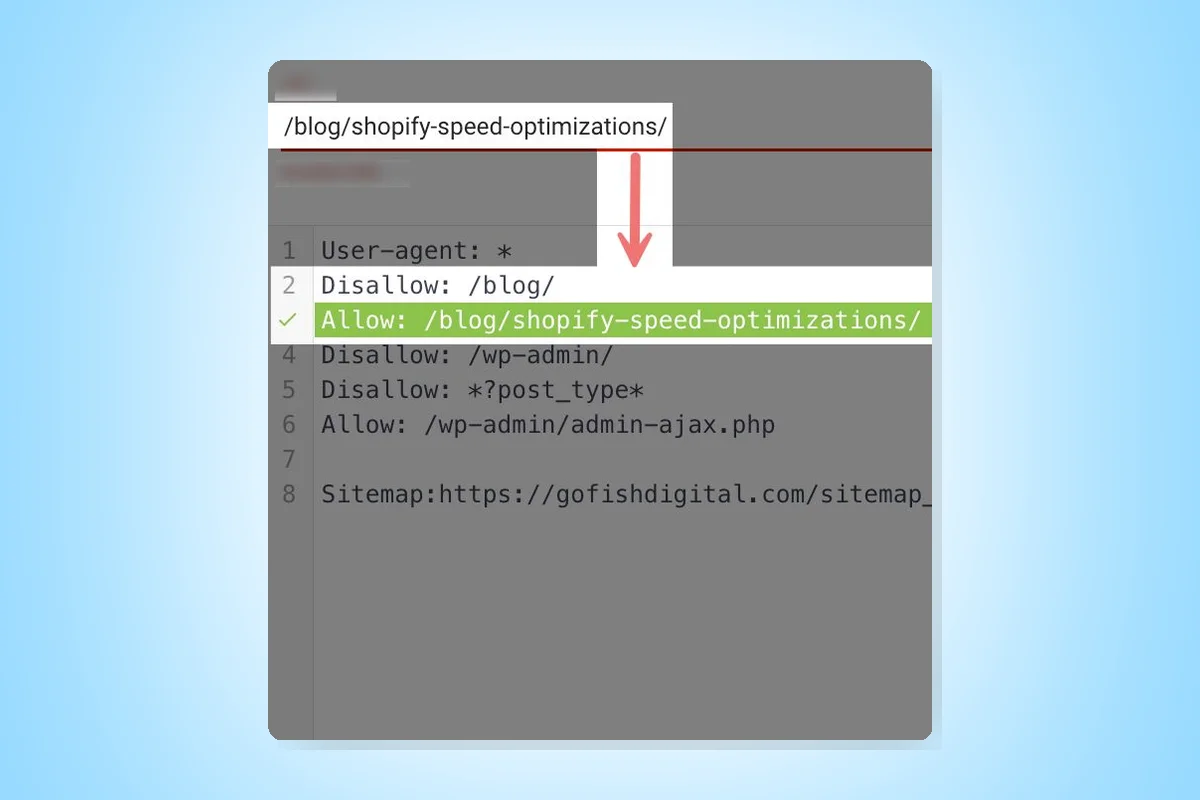

In technical SEO, robots.txt rules are resolved by specificity: when two directives conflict, the more specific one wins. For example, suppose your robots.txt file contains these two directives:

Disallow: /blog/

Allow: /blog/shopify-speed-optimizations/

Despite the conflict, Google will crawl /blog/shopify-speed-optimizations/ because the Allow rule matches a longer, more specific path than the Disallow rule. Every other URL under /blog/ stays blocked.
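The precedence rule above can be sketched in a few lines of Python. This is a minimal illustration, not Google's actual implementation: the function name `is_allowed` and the rule tuples are hypothetical, wildcards (`*` and `$`) are ignored, and it assumes that an Allow wins when an Allow and a Disallow match with equal length.

```python
def is_allowed(path, rules):
    """Return True if `path` may be crawled under longest-match precedence.

    `rules` is a list of (directive, pattern) tuples, for example
    ("Disallow", "/blog/"). Hypothetical helper: plain prefix matching only.
    """
    best_len = -1
    allowed = True  # no matching rule means crawling is allowed
    for directive, pattern in rules:
        # Skip empty patterns and patterns that do not prefix the path.
        if not pattern or not path.startswith(pattern):
            continue
        is_allow = directive.lower() == "allow"
        # The longer (more specific) pattern wins; on a tie, Allow wins.
        if len(pattern) > best_len or (len(pattern) == best_len and is_allow):
            best_len = len(pattern)
            allowed = is_allow
    return allowed


rules = [
    ("Disallow", "/blog/"),
    ("Allow", "/blog/shopify-speed-optimizations/"),
]

print(is_allowed("/blog/shopify-speed-optimizations/", rules))  # True
print(is_allowed("/blog/another-post/", rules))                 # False
```

The order of the rules does not matter here; only the length of the matching path decides the outcome.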

The same logic applies to user-agents. For instance, suppose you have two rule groups:

- All user-agents cannot crawl the blog

- Googlebot can crawl the blog

The result is that Googlebot can crawl the blog, because a crawler follows the group that names it most specifically. All other user-agents fall under the general rule and remain blocked from the blog.
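One way to check this behaviour is with Python's standard-library `urllib.robotparser`. The robots.txt content and the `Bingbot` crawler name below are assumptions chosen to mirror the two rules above. Note that this parser applies the first matching path rule within a group rather than the longest one, so it is only a rough stand-in for how Google evaluates a file; with a single rule per group, as here, the difference does not matter.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt mirroring the two rules described above.
ROBOTS_TXT = """\
User-agent: *
Disallow: /blog/

User-agent: Googlebot
Allow: /blog/
"""

rp = RobotFileParser()
rp.parse(ROBOTS_TXT.splitlines())

# Googlebot matches its own named group, so the general group is skipped.
print(rp.can_fetch("Googlebot", "/blog/"))  # True

# Any other crawler falls back to the "*" group and is blocked.
print(rp.can_fetch("Bingbot", "/blog/"))    # False
```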

This principle lets you carve out exceptions to broad rules, most often by using an "Allow" directive to override a broad "Disallow" and permit search engines to crawl something specific.