Microsoft blocks AI recommendation poisoning attacks targeting Copilot assistant

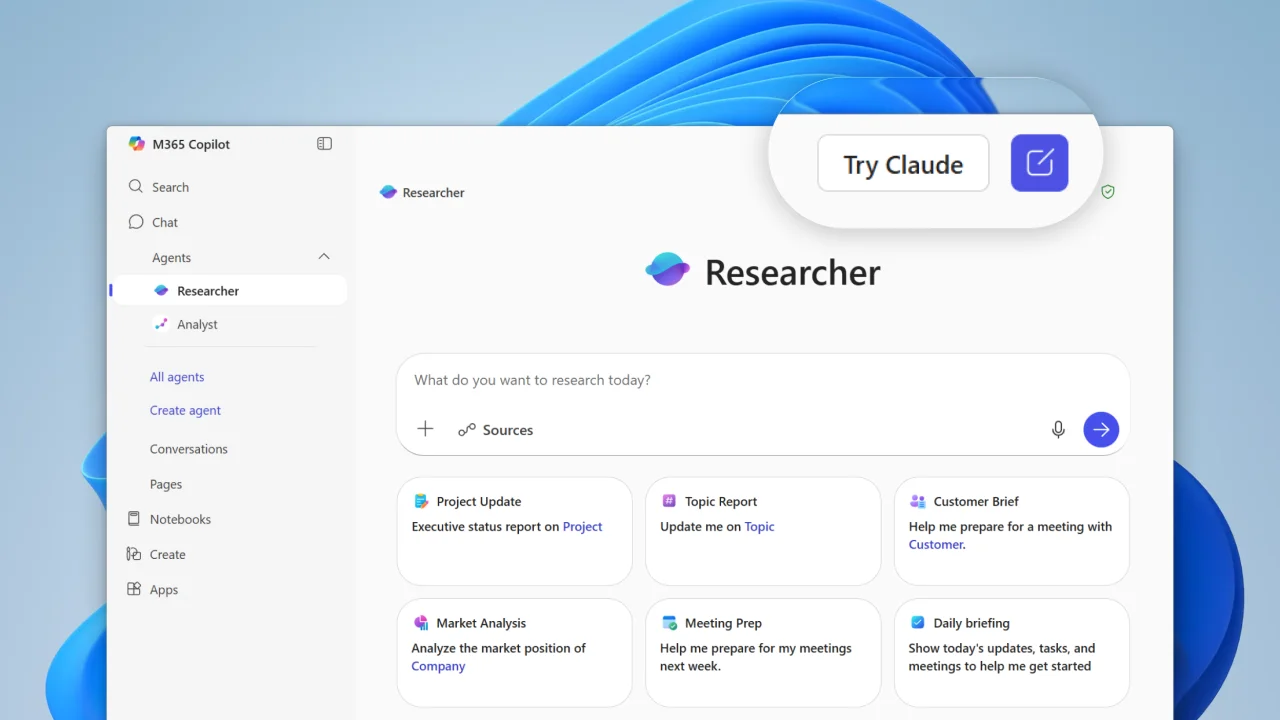

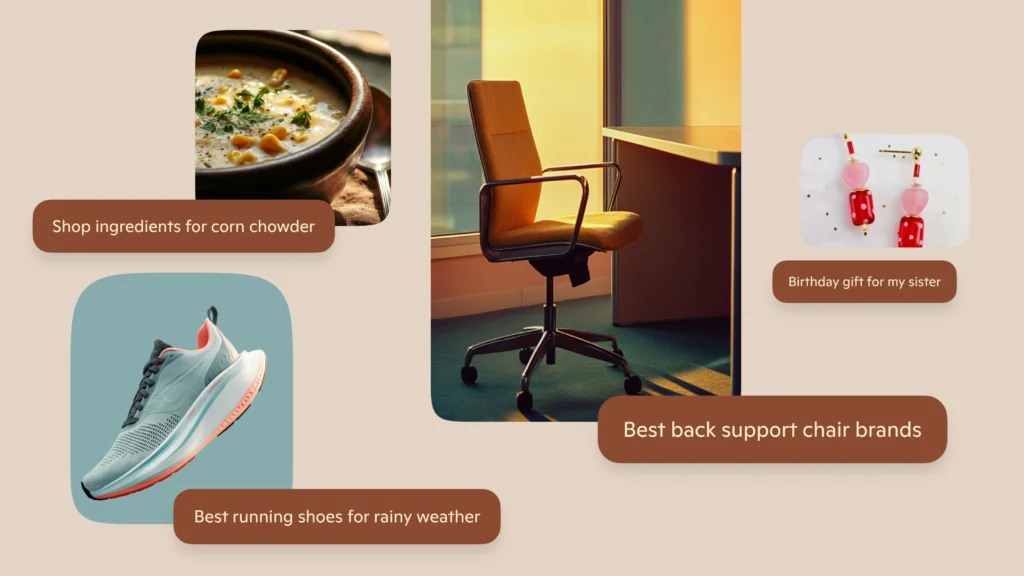

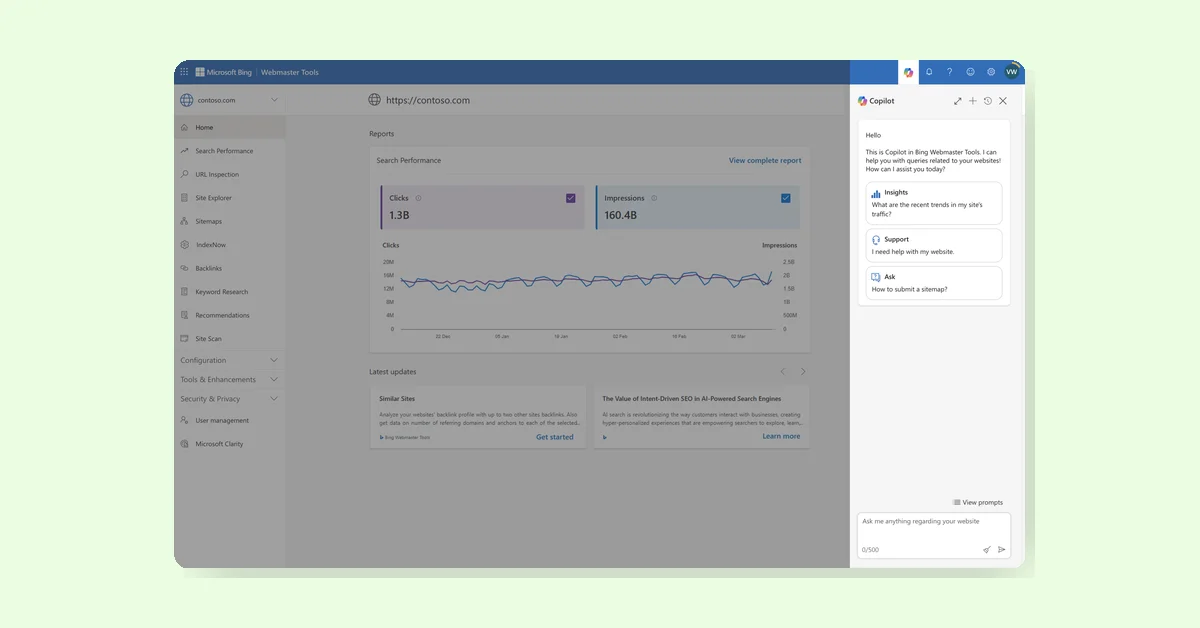

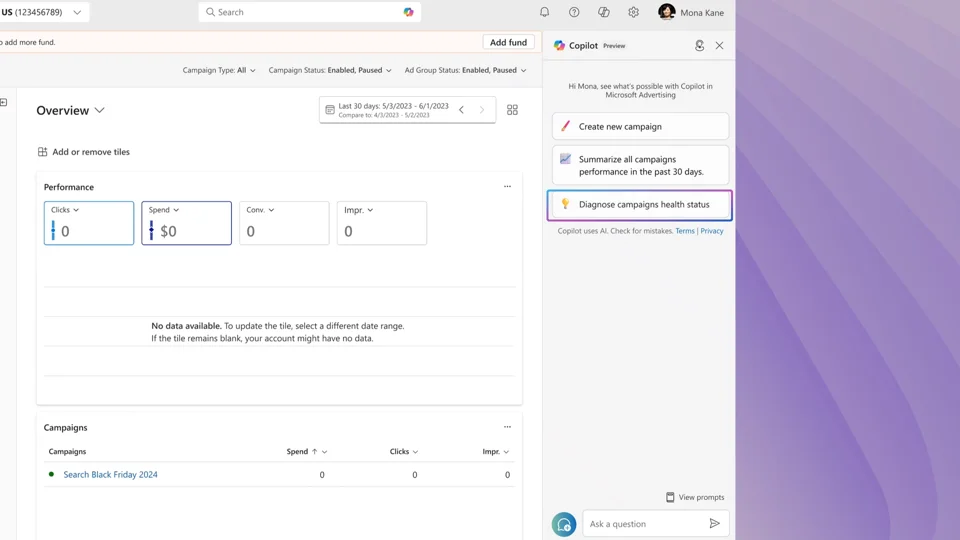

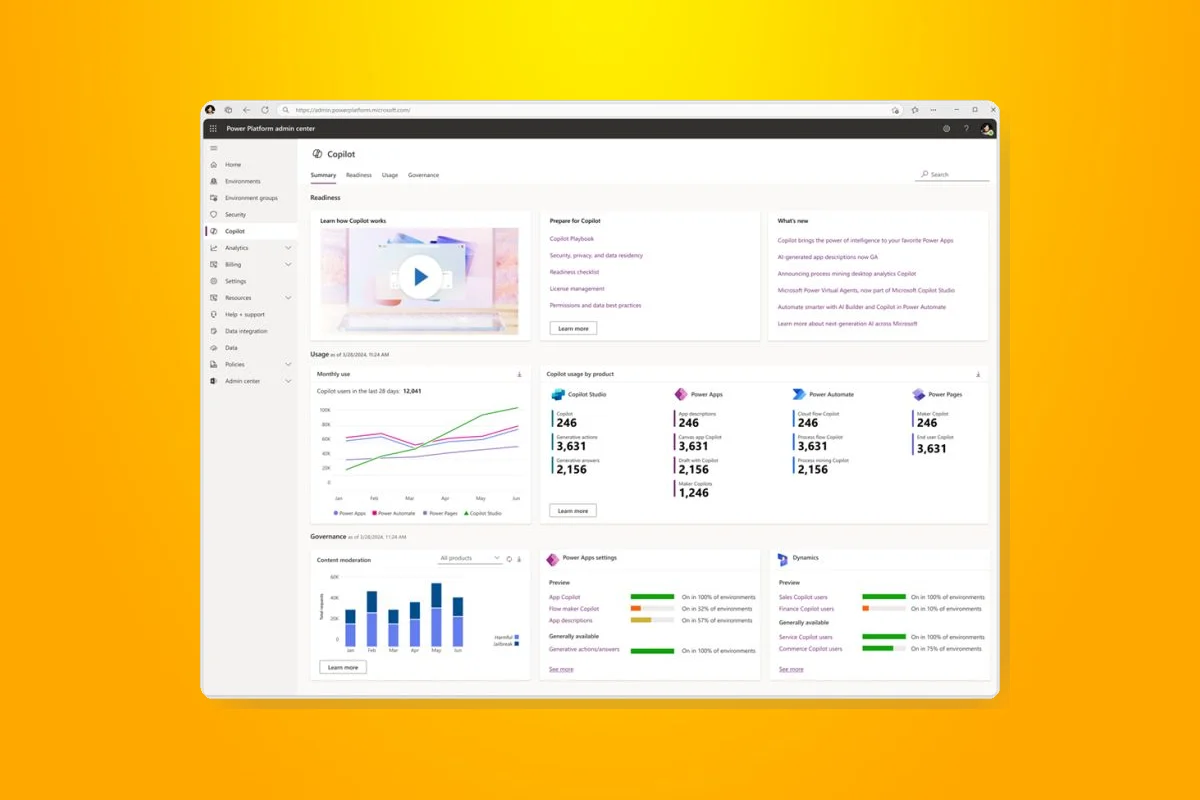

Microsoft researchers found AI Recommendation Poisoning, where companies embed hidden instructions in AI prompts to bias assistants toward their products. This memory poisoning makes AI remember certain sources as trusted, affecting recommendations in health, finance, and more. Microsoft deployed mitigations in Copilot, including prompt filtering and memory controls. Users should be cautious with AI links and check stored memories to avoid manipulation.